How to Engineer Secure Things: Past Mistakes and Future Advice

Things are all the rage right now — more specifically, connected and embedded things. As a result of the increasing demand for connectivity and the decreasing cost of hardware, engineers and developers are pushing computing power and connectivity into everyday things — refrigerators, light bulbs, TVs, cars, and other devices. Companies are under constant pressure to send things out quickly and into the hands of users. Security often becomes an afterthought. To say Internet of Things security is often lacking is an understatement.

IoT is growing — and it’s ripe for abuse.

As a person interested in software security and hardware, I frequently see intersections between the two that make me think, laugh, and sometimes cry. Having spent several years working in the security industry with a previous stint in embedded security research and development, I’ll examine three high-level security mistakes that frequently make the news, and provide advice to security-conscious product developers that will (hopefully) reduce the chance that the next story is about them.

Mistake #1 – Insecure Updates

The connected nature of these devices means updates can be delivered quickly and cheaply, using the built-in uplink to pull down the latest firmware. However, this convenient functionality is frequently targeted by attackers; compromising the update package can mean instant on-chip execution. No amount of software permissions or protections can stop a malicious firmware update once the device accepts it. There are various ways the image can be tampered with, ranging from update server compromises to man-in-the-middle attacks over Wi-Fi, but the fix is typically the same. A device root of trust (RoT) and code signing of each delivered image ensure that the firmware is intact and untampered with, and that it originates from a reputable source (i.e., the developer). Also, check that the device responds correctly to an update failure and fails closed rather than open (safety requirements notwithstanding, more on this later).

For an example of this mistake in recent news, consider the cautionary tale of the TrackingPoint rifle. This rifle had an update process that failed to properly validate new firmware, allowing security researchers to gain root access on the system. Normally the phrase “remote root access” is bad enough, but this was made far worse because the target device was a working firearm that could suddenly be controlled by a third party. The researchers stressed that the trigger must be pulled by a human, so kudos on that design decision.

In short, updates need to be cryptographically validated, and that validation needs to be implemented correctly.
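To make "fail closed" concrete, here is a minimal sketch of an update gate. It is an illustration under assumptions, not any vendor's actual update code: `apply_update`, `UpdateRejected`, and the two callables are hypothetical names. The real signature check (for example, Ed25519 or ECDSA verification against a public key anchored in the device RoT) is passed in as `verify_signature`, because Python's standard library has no asymmetric signature primitive.

```python
from typing import Callable


class UpdateRejected(Exception):
    """Raised whenever a firmware image must not be installed."""


def apply_update(
    image: bytes,
    signature: bytes,
    verify_signature: Callable[[bytes, bytes], bool],  # hypothetical RoT-backed check
    install: Callable[[bytes], None],                  # hypothetical flash routine
) -> None:
    """Install `image` only after its signature verifies; otherwise fail closed."""
    try:
        verified = verify_signature(image, signature)
    except Exception as exc:
        # A verifier that crashes is treated exactly like a bad signature.
        raise UpdateRejected("signature verifier raised an error") from exc
    # Require a literal True so that None, 0, or other odd return values
    # from a buggy verifier can never be mistaken for success.
    if verified is not True:
        raise UpdateRejected("signature did not verify")
    install(image)
```

The point of the design is that there is exactly one path to `install`, and every error, exception, or ambiguous result ends in rejection, leaving the current firmware in place.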

Mistake #2 – Insecure Cryptography

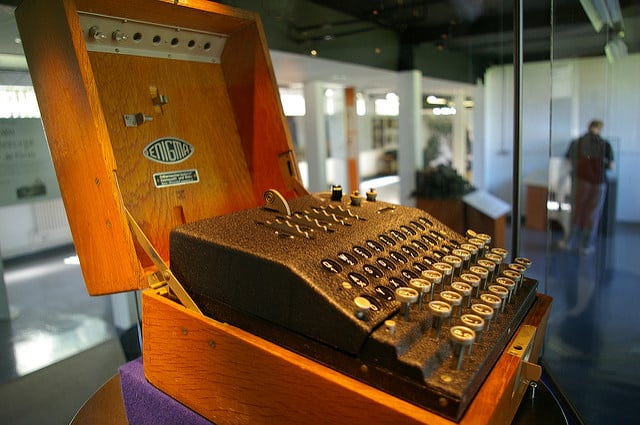

Let’s be honest: cryptography is hard. There’s a reason it was the domain of shadowy government organizations for so much of history; even today, deep cryptanalysis and correct algorithm design are the realm of PhD-level mathematicians and scientists.

Regardless, each device needs to implement cryptography correctly — and use the right tool in the process.

Fortunately for the rest of us, cryptography is more ubiquitous than ever before. Military-grade tools and guidance are available to the public for securing communications. Carefully follow the guidance provided by organizations such as the NSA and NIST (or numerous others), and ensure that the information is known and used early in the design process.

The following are a few guidelines for applying cryptography in Internet-connected devices.

Encrypt Sensitive Communications

Verify that any sensitive information is encrypted before it leaves the device. The operating environment is most likely unknown, so it should be treated as hostile and data should be protected accordingly.

Failure to do so results in events like this smart TV situation, where it was discovered that TVs sent users’ viewing habits over the Internet in the clear, or this incident from early 2016 where a security system did the same with user passwords.

Reject Self-Signed or Untrusted Certificates and Chains

Encryption won’t do much, though, if the device will set up an encrypted session with anyone who asks. The device RoT should anchor every certificate chain used for encrypted communication, and any part of the chain that fails verification should be rejected. For example, this write-up details how another smart TV used encryption during communications, but accepted certificates from any remote source. As a result, an attacker could easily inject a self-signed certificate to gain access to the communications stream.
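As a rough sketch of what "reject untrusted chains" looks like in client code, the following uses Python's standard `ssl` module to build a TLS context that refuses unverified certificates and mismatched hostnames. The function name `make_device_tls_context` and the idea of a vendor CA bundle path are assumptions for illustration; an embedded device would more likely do the equivalent in its own TLS stack.

```python
import ssl
from typing import Optional


def make_device_tls_context(ca_file: Optional[str] = None) -> ssl.SSLContext:
    """Build a client-side TLS context that rejects untrusted certificate chains."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_CLIENT)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # Both settings are already the defaults for PROTOCOL_TLS_CLIENT;
    # they are set explicitly so nobody "simplifies" them away later.
    ctx.check_hostname = True
    ctx.verify_mode = ssl.CERT_REQUIRED
    if ca_file is not None:
        # Trust only the vendor's own CA bundle (hypothetical path supplied by caller).
        ctx.load_verify_locations(cafile=ca_file)
    else:
        ctx.load_default_certs()
    return ctx
```

A socket wrapped with this context (via `ctx.wrap_socket(sock, server_hostname=...)`) fails the handshake with `ssl.SSLCertVerificationError` when the peer presents a self-signed or otherwise unverifiable certificate, rather than silently continuing.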

Use Peer-Reviewed Algorithms

As stated earlier, cryptography is hard. It’s a well-known rule that creating custom and non-peer-reviewed cryptographic algorithms is a bad idea, yet it still happens. “A Practical Cryptanalysis of the Algebraic Eraser” shows how weaknesses in a lightweight, IoT-specific algorithm led to a practical attack against the protocol that renders defenses useless. For best results, stick with well-known and well-tested cryptography in well-known and well-tested libraries.

Verify That Random Numbers Are Securely Generated and Used Correctly

Random numbers are intrinsic to cryptography; considerable effort goes into making sure these numbers are unpredictable, whether they come from a hardware source or a cryptographically secure pseudo-random generator (as opposed to an ordinary pseudo-random one). These random numbers are used for anything from temporary session keys to algorithm components. Generating or using them incorrectly can lead to disclosure of the communications stream or of the keying material. This presentation snippet by fail0verflow illustrates how the Sony PlayStation 3’s private key could be calculated because Sony’s signing process failed to use a fresh random number for each signature.
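The algebra behind that kind of failure is short enough to sketch. In ECDSA, each signature uses a secret per-signature number k; if the same k signs two different messages, anyone holding both signatures can solve for the private key. The code below is a toy illustration of that arithmetic only, under stated assumptions: `N` is a small stand-in prime rather than a real curve order, and `r` is a random stand-in for the x-coordinate of k·G (in real ECDSA, reusing k automatically yields the same r). All function names are hypothetical.

```python
import hashlib
import secrets

N = 2**127 - 1  # toy prime standing in for the curve's group order


def digest(message: bytes) -> int:
    """Hash a message to an integer modulo N."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N


def sign(d: int, z: int, k: int, r: int) -> int:
    """ECDSA's s-equation: s = k^-1 * (z + r*d) mod N."""
    return (pow(k, -1, N) * (z + r * d)) % N


def recover_private_key(r: int, s1: int, z1: int, s2: int, z2: int) -> int:
    """Recover d from two signatures that share the same k (and so the same r)."""
    k = ((z1 - z2) * pow((s1 - s2) % N, -1, N)) % N
    return ((s1 * k - z1) * pow(r, -1, N)) % N


d = secrets.randbelow(N - 1) + 1  # the signer's private key
k = secrets.randbelow(N - 1) + 1  # the bug: one k reused for both signatures
r = secrets.randbelow(N - 1) + 1  # stand-in for the x-coordinate of k*G
z1, z2 = digest(b"firmware v1"), digest(b"firmware v2")
s1, s2 = sign(d, z1, k, r), sign(d, z2, k, r)
assert recover_private_key(r, s1, z1, s2, z2) == d
```

Note that the attacker never sees `d` or `k`; the two public signatures and message hashes are enough. In practice, the defense is to draw every per-signature value from a cryptographically secure source (in Python, the `secrets` module) or to use a deterministic-nonce scheme from a well-tested library.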

Generate New Secret Keying Material For Individual Devices

This problem has appeared in the media recently and not simply in the IoT space. If keying material is supposed to stay secret, and the same keys are distributed across every produced device, how exactly is keying material expected to stay, well, a secret?

The hardware and software that hides keys on devices is generally well-trusted, but it’s only a matter of time until keys can be extracted. There are a multitude of ways to extract the memory contents of chips, even when debug interfaces are turned off in production devices. An in-depth examination of how attackers can retrieve memory from hardware is a topic for another time (see Security Engineering by Ross Anderson, specifically Chapter 16 for some interesting techniques), but it’s safe to assume that any shared secret key won’t stay a secret forever. There is some expensive and purpose-built hardware designed to resist these types of attacks and securely store keying material, but general-purpose chips don’t offer much protection. The affordable way to mitigate these attacks is to make sure that the only keying material shared across devices is public, and that any private keying material on a device is unique to that device.

Reused keys on router firmware or on Wi-Fi enabled lightbulbs are both cases of IoT security failures on this front. Both are the same issue with the same outcome: All devices that use the same key are susceptible to undetectable man-in-the-middle attacks.
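A minimal sketch of the provisioning side, assuming a factory step that assigns each device its own secret: `provision` generates an independent 256-bit secret per serial number, and `find_shared_keys` is a simple audit that flags any key appearing on more than one device. Both function names and the manifest layout are hypothetical; a real production line would typically generate asymmetric key pairs on-device or in an HSM.

```python
import hashlib
import secrets
from collections import defaultdict
from typing import Dict, Iterable, List


def provision(serials: Iterable[str]) -> Dict[str, bytes]:
    """Give every device its own independently generated 256-bit secret."""
    return {serial: secrets.token_bytes(32) for serial in serials}


def fingerprint(key: bytes) -> str:
    """Short, non-reversible identifier so audits never log raw keys."""
    return hashlib.sha256(key).hexdigest()[:16]


def find_shared_keys(keys_by_serial: Dict[str, bytes]) -> Dict[str, List[str]]:
    """Return fingerprints of keys that appear on more than one device."""
    serials_by_fp = defaultdict(list)
    for serial, key in keys_by_serial.items():
        serials_by_fp[fingerprint(key)].append(serial)
    return {fp: sorted(s) for fp, s in serials_by_fp.items() if len(s) > 1}
```

Running `find_shared_keys` over a firmware build's manifest returns an empty dictionary when every device key is unique; any non-empty result means one extracted key compromises several devices.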

Mistake #3 – Fundamentally Insecure Design

Fundamentally insecure design is an all-encompassing term used to describe design choices that make sense from a feature – but not a security – standpoint. You may have noticed an increasing number of “car hacks” in 2015 where researchers remotely commanded and controlled consumer vehicles.

Attackers can enter these systems in numerous ways, but they all suffer from the same problem: over-connected systems, which stem from a failure in the design process to recognize how to control information flow from lower-trust areas to higher ones. This is essentially a fancy way of saying that the parts controlling the car are connected to the parts that consumers use for reasons unrelated to safety (like the sound system).

Imagine that one requirement for a commercial jetliner is that passengers can view the current airspeed, heading, and altitude from the cockpit directly through the in-flight infotainment system at each passenger’s seat. This information is then periodically published on the network, sourced from the aircraft’s navigation sensors and destined for both flight control systems and passenger displays. Lacking certified and accredited controls to prevent the flow of information from passenger systems to flight control systems, this design represents an enormous risk to the operational safety of the aircraft, as low-trust information from passenger displays can be used to influence high-trust decisions made by the flight control systems. In a correctly designed system, such a thing would be impossible.

Now, while this scenario seems like a grievous flaw in an aircraft design (and it is), it’s not hard to imagine a similar mistake in a less safety-oriented system, such as a consumer automobile. This is essentially what happened in those car hacks that kept making the news last year. The passenger systems (many of which were Internet-connected) were connected directly to the automobile control systems, and allowed commands and data to be sent and received. The solution here is to have a formal model applied to the system in the design phase that will limit communications and recognize the amount of trust placed in any given data (for example, the Biba Integrity Model). Based on the model, controls can be applied as necessary to meet the communications requirements of the system.
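To illustrate, the core of the Biba strict integrity policy fits in a few lines: a component may send data only to components at the same or a lower integrity level ("no write up"). The sketch below applies that rule to the jetliner scenario; the component names, the two-level lattice, and `flow_allowed` are assumptions chosen for illustration, not part of any real avionics or automotive design.

```python
from enum import IntEnum


class Integrity(IntEnum):
    LOW = 1   # passenger-facing, possibly Internet-connected
    HIGH = 2  # safety-critical


# Hypothetical component-to-level assignment for the jetliner example.
LEVELS = {
    "navigation_sensors": Integrity.HIGH,
    "flight_control": Integrity.HIGH,
    "passenger_display": Integrity.LOW,
}


def flow_allowed(source: str, destination: str) -> bool:
    """Biba 'no write up': data may only flow to equal or lower integrity."""
    src, dst = LEVELS.get(source), LEVELS.get(destination)
    if src is None or dst is None:
        return False  # unknown components are denied (fail closed)
    return src >= dst


assert flow_allowed("navigation_sensors", "flight_control")
assert flow_allowed("navigation_sensors", "passenger_display")
assert not flow_allowed("passenger_display", "flight_control")
```

Under this rule the sensors can still publish airspeed to the seat-back displays, but nothing originating at a passenger system can reach flight control. A real design would enforce the same policy in gateways or hardware (for example, a one-way data link) rather than in application code.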

This problem is more specific to IoT devices than traditional software because we may rely on these devices to keep us safe and out of harm’s way. Recognizing the safety-critical systems — and designing and implementing accordingly — is necessary. Safety-critical engineering and software design may be an expensive part of the device design process, but it’s a necessary part.

Closing Thoughts

Security is never a one-off feature intended to simply check a box, but an ongoing part of the product lifecycle. My advice relates to embedded devices; the references and stories included serve as examples of how things went wrong, the impact, and in many cases, how things were eventually fixed.

These problems have been seen for years in the traditional software industry. Their introduction into the IoT space presents a challenge for a workforce that has traditionally been insulated from many information security concerns because its systems were unconnected.

Like software developers before them, device engineers must learn how to properly secure their newly connected devices and identify early in the product lifecycle when a design or implementation choice will have potentially disastrous consequences.

Here’s to hoping that one day I’ll have to look harder for these stories.

Enigma Machine Photo Credit: Tim Gage via Source / CC BY-SA

Newspaper image: Brian A. Jackson/Shutterstock
